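Loss Functions

① A loss function measures the gap between the actual output and the target.

② A loss function also gives us a basis for updating the output, that is, for adjusting the model through backpropagation.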

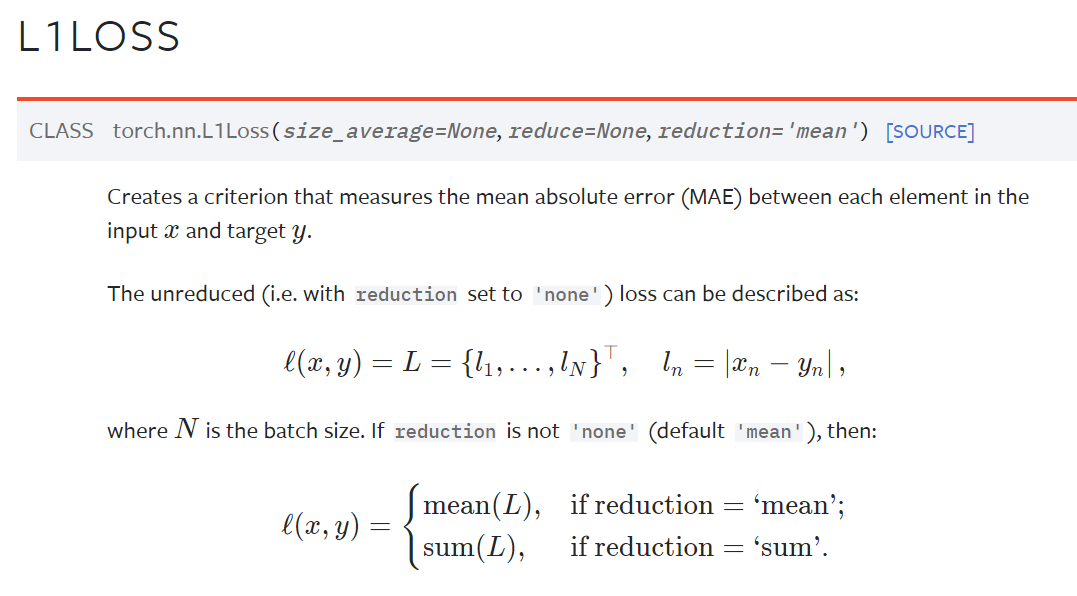

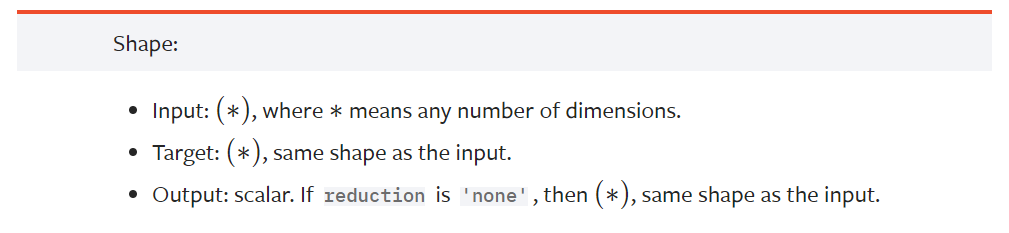

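L1Loss

l(x, y) is computed differently depending on the value of the reduction parameter

mean averages the element-wise absolute differences (the default)

sum adds them up

Example

X: 1, 2, 3

Y: 1, 2, 5

L1Loss = (0 + 0 + 2) / 3 = 0.667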

```python
import torch
from torch.nn import L1Loss

inputs = torch.tensor([1, 2, 3], dtype=torch.float32)
targets = torch.tensor([1, 2, 5], dtype=torch.float32)

# The documented shape for both input and target is (*), i.e. any shape,
# so no reshape is needed.
# inputs = torch.reshape(inputs, (1, 1, 1, 3))
# targets = torch.reshape(targets, (1, 1, 1, 3))

loss = L1Loss()  # reduction defaults to 'mean'
result = loss(inputs, targets)
print(result)
```

Output: tensor(0.6667), which matches the hand calculation

Setting reduction to sum:

```python
import torch
from torch.nn import L1Loss

inputs = torch.tensor([1, 2, 3], dtype=torch.float32)
targets = torch.tensor([1, 2, 5], dtype=torch.float32)
inputs = torch.reshape(inputs, (1, 1, 1, 3))
targets = torch.reshape(targets, (1, 1, 1, 3))

# With 'sum', the three absolute differences are added up instead of averaged.
loss = L1Loss(reduction='sum')
result = loss(inputs, targets)
print(result)
```

Output: tensor(2.), as expected
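The effect of reduction can also be checked by hand. Below is a minimal pure-Python sketch of the same arithmetic. The helper name l1_loss is made up for illustration and is not part of PyTorch; 'none', which returns the per-element losses unreduced, is the third value that nn.L1Loss accepts.

```python
def l1_loss(xs, ys, reduction="mean"):
    """Hypothetical helper mirroring the arithmetic of nn.L1Loss on flat lists."""
    diffs = [abs(x - y) for x, y in zip(xs, ys)]  # element-wise |x - y|
    if reduction == "none":
        return diffs
    if reduction == "sum":
        return sum(diffs)
    if reduction == "mean":
        return sum(diffs) / len(diffs)
    raise ValueError(f"unknown reduction: {reduction!r}")


X = [1.0, 2.0, 3.0]
Y = [1.0, 2.0, 5.0]
print(l1_loss(X, Y, "none"))  # [0.0, 0.0, 2.0]
print(l1_loss(X, Y, "sum"))   # 2.0
print(l1_loss(X, Y, "mean"))  # 0.6666666666666666
```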

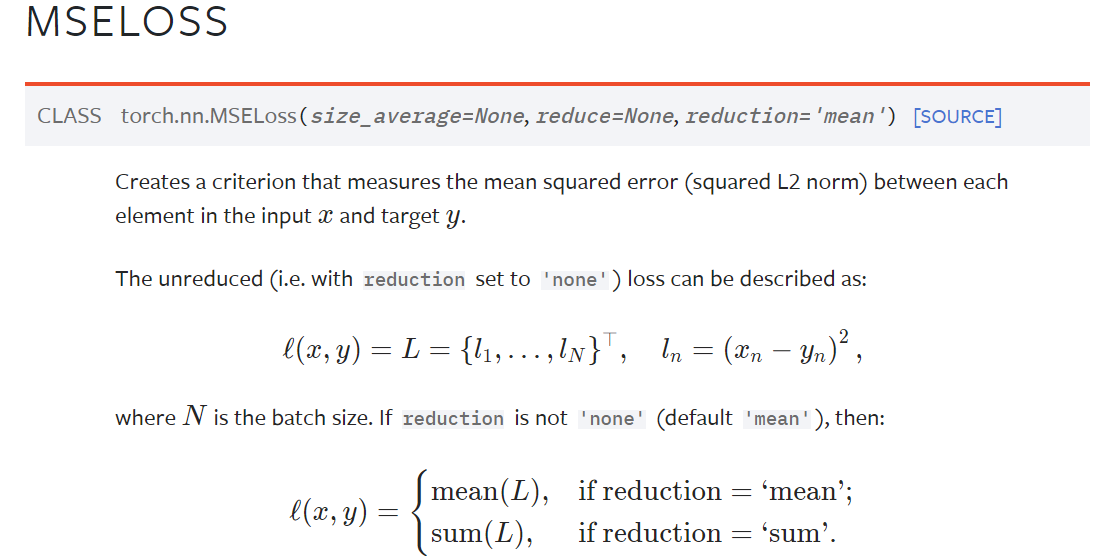

MSE Loss

nn.MSELoss

The formula is as follows; it is the mean of the squared differences
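For reference, the formula can be written out as below. This is transcribed from the nn.MSELoss documentation as I understand it, not from these notes, with N the number of elements and the last line covering the two reduction modes:

```latex
\ell(x, y) = \{l_1, \dots, l_N\}^{\top}, \qquad l_n = (x_n - y_n)^2

\ell_{\text{mean}}(x, y) = \frac{1}{N} \sum_{n=1}^{N} l_n, \qquad
\ell_{\text{sum}}(x, y) = \sum_{n=1}^{N} l_n
```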

Example

X: 1, 2, 3

Y: 1, 2, 5

MSELoss = (0 + 0 + 2^2) / 3 = 1.333

```python
import torch
from torch import nn

inputs = torch.tensor([1, 2, 3], dtype=torch.float32)
targets = torch.tensor([1, 2, 5], dtype=torch.float32)
inputs = torch.reshape(inputs, (1, 1, 1, 3))
targets = torch.reshape(targets, (1, 1, 1, 3))

loss_mse = nn.MSELoss()  # reduction defaults to 'mean'
result_mse = loss_mse(inputs, targets)
print(result_mse)
```

Output: tensor(1.3333)
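As with L1Loss, nn.MSELoss takes a reduction argument. A short sketch, assuming the same values as above, that compares the module against the squared differences computed by hand:

```python
import torch
from torch import nn

inputs = torch.tensor([1.0, 2.0, 3.0])
targets = torch.tensor([1.0, 2.0, 5.0])

squared = (inputs - targets) ** 2           # element-wise squared differences
mse_mean = nn.MSELoss()(inputs, targets)    # reduction='mean' is the default
mse_sum = nn.MSELoss(reduction="sum")(inputs, targets)

print(squared)   # tensor([0., 0., 4.])
print(mse_mean)  # tensor(1.3333)
print(mse_sum)   # tensor(4.)
```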

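Cross-Entropy Loss

nn.CrossEntropyLoss

The official documentation entry is long, so read it there for the full details.

Mainly used for classification problems

Example

| Person | Dog | Cat |
|---|---|---|
| 0 | 1 | 2 |

That is, 0, 1, and 2 stand for person, dog, and cat respectively

target = 1: the target value is 1, i.e. dog

classes = 3: there are three classes

output = [0.1, 0.2, 0.3]: the model's raw scores (logits) for person, dog, and cat are 0.1, 0.2, and 0.3. These are not probabilities (they do not sum to 1); nn.CrossEntropyLoss applies softmax internally.

Here output is the model's output, which is the input to the loss function.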
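To see which probabilities these scores correspond to, here is a small library-free sketch of softmax, the normalization that nn.CrossEntropyLoss applies internally (in log form). The function name softmax is just a local helper defined for this example.

```python
import math


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    exps = [math.exp(s) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


output = [0.1, 0.2, 0.3]             # raw scores for person, dog, cat
probs = softmax(output)
print([round(p, 4) for p in probs])  # [0.3006, 0.3322, 0.3672]
```

The three scores are close together, so the resulting probabilities are all near 1/3.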

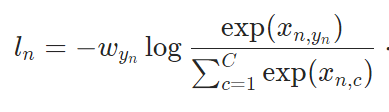

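For the formula, the official documentation states: where x is the input, y is the target, w is the weight, C is the number of classes

That is, w is the (optional) weight, C is the number of classes, and log is the natural logarithm ln

x_{n,y_n} is the input value at the target class; with target 1 (Dog) it is 0.2

This gives loss = -ln( exp(0.2) / (exp(0.1) + exp(0.2) + exp(0.3)) ) = 1.1019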
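Written out, the per-sample loss for class-index targets looks as follows. This is my transcription of the nn.CrossEntropyLoss documentation, leaving out ignore_index and label smoothing; the second line evaluates it for this example with w = 1:

```latex
l_n = -w_{y_n} \log \frac{\exp(x_{n,y_n})}{\sum_{c=1}^{C} \exp(x_{n,c})}

l_1 = -\ln \frac{e^{0.2}}{e^{0.1} + e^{0.2} + e^{0.3}}
    = -0.2 + \ln\left(e^{0.1} + e^{0.2} + e^{0.3}\right)
    \approx -0.2 + 1.3019 = 1.1019
```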

We verify the result with code:

Note: the input shape is (C) or (N, C)

```python
import torch
from torch import nn

x = torch.tensor([0.1, 0.2, 0.3])
y = torch.tensor([1])
x = torch.reshape(x, (1, 3))  # batch size 1, three classes

loss_cross = nn.CrossEntropyLoss()
result_cross = loss_cross(x, y)
print(result_cross)
```

Output: tensor(1.1019)
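Cross-entropy on raw scores is the same as log-softmax followed by negative log-likelihood, which is presumably why the training outputs further down show grad_fn=<NllLossBackward0>. A small sketch using the functional API to check that equivalence on the example above:

```python
import torch
import torch.nn.functional as F

x = torch.tensor([[0.1, 0.2, 0.3]])  # shape (N=1, C=3), raw scores
y = torch.tensor([1])                # class index of the target (Dog)

ce = F.cross_entropy(x, y)
log_probs = F.log_softmax(x, dim=1)  # log of the softmax probabilities
nll = F.nll_loss(log_probs, y)       # picks -log_probs[n, y[n]] and averages
by_hand = -log_probs[0, 1]           # the same number, indexed directly

print(ce, nll, by_hand)  # tensor(1.1019) tensor(1.1019) tensor(1.1019)
```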

Computing the Loss on a Dataset

```python
import torch
import torchvision
from torch import nn
from torch.nn import Conv2d, MaxPool2d, Flatten, Linear, Sequential
from torch.utils.data import DataLoader

dataset = torchvision.datasets.CIFAR10(
    "./dataset", train=False,
    transform=torchvision.transforms.ToTensor(), download=True)
dataloader = DataLoader(dataset, batch_size=64, drop_last=True)


class Tudui(nn.Module):
    def __init__(self):
        super(Tudui, self).__init__()
        self.model1 = Sequential(
            Conv2d(3, 32, 5, padding=2),
            MaxPool2d(2),
            Conv2d(32, 32, 5, padding=2),
            MaxPool2d(2),
            Conv2d(32, 64, 5, padding=2),
            MaxPool2d(2),
            Flatten(),
            Linear(1024, 64),  # 64 channels * 4 * 4 = 1024 features
            Linear(64, 10),
        )

    def forward(self, x):
        x = self.model1(x)
        return x


loss = nn.CrossEntropyLoss()  # cross-entropy
tudui = Tudui()
for data in dataloader:
    imgs, targets = data
    outputs = tudui(imgs)
    result_loss = loss(outputs, targets)  # gap between actual output and target
    print(result_loss)
```

Output:

```text
Files already downloaded and verified
tensor(2.2994, grad_fn=<NllLossBackward0>)
tensor(2.2952, grad_fn=<NllLossBackward0>)
tensor(2.3162, grad_fn=<NllLossBackward0>)
tensor(2.3234, grad_fn=<NllLossBackward0>)
tensor(2.2983, grad_fn=<NllLossBackward0>)
tensor(2.3051, grad_fn=<NllLossBackward0>)
tensor(2.2991, grad_fn=<NllLossBackward0>)
```

Backpropagating the Loss

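Backpropagation computes the gradient of the loss with respect to each parameter; these gradients are then used to update the parameters so that the loss is minimized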
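Before the full network, the mechanics can be seen on a toy case. The sketch below uses a two-element tensor as a stand-in parameter and a made-up loss (the sum of squares); neither comes from the notes. It shows that .grad is empty until backward() runs, and then applies one manual update step.

```python
import torch

w = torch.tensor([1.0, 2.0], requires_grad=True)  # a stand-in "parameter"
loss = (w ** 2).sum()   # toy loss: w1^2 + w2^2

grad_before = w.grad
print(grad_before)      # None: nothing has been backpropagated yet
loss.backward()         # computes d(loss)/dw = 2 * w
print(w.grad)           # tensor([2., 4.])

# One manual gradient-descent step (what an optimizer automates).
lr = 0.1
with torch.no_grad():
    w -= lr * w.grad
print(w)                # tensor([0.8000, 1.6000], requires_grad=True)
```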

```python
import torch
import torchvision
from torch import nn
from torch.nn import Conv2d, MaxPool2d, Flatten, Linear, Sequential
from torch.utils.data import DataLoader

dataset = torchvision.datasets.CIFAR10(
    "./dataset", train=False,
    transform=torchvision.transforms.ToTensor(), download=True)
dataloader = DataLoader(dataset, batch_size=64, drop_last=True)


class Tudui(nn.Module):
    def __init__(self):
        super(Tudui, self).__init__()
        self.model1 = Sequential(
            Conv2d(3, 32, 5, padding=2),
            MaxPool2d(2),
            Conv2d(32, 32, 5, padding=2),
            MaxPool2d(2),
            Conv2d(32, 64, 5, padding=2),
            MaxPool2d(2),
            Flatten(),
            Linear(1024, 64),
            Linear(64, 10),
        )

    def forward(self, x):
        x = self.model1(x)
        return x


loss = nn.CrossEntropyLoss()  # cross-entropy
tudui = Tudui()
for data in dataloader:
    imgs, targets = data
    outputs = tudui(imgs)
    result_loss = loss(outputs, targets)  # gap between actual output and target
    # The loss tensor has a backward() method. Before the first call, each
    # parameter's .grad is None; afterwards .grad holds the gradient that
    # the optimizer will later use to update the network's parameters.
    result_loss.backward()
    print()  # no-op line, handy for setting a debugger breakpoint
```

Optimizer

torch.optim

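① Calling backward on the loss runs backpropagation and yields the gradients of the parameters we need to adjust; the optimizer then uses those gradients to adjust the parameters, lowering the overall error.

② Gradients must be zeroed before each step; otherwise they accumulate from one iteration to the next.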
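The accumulation in ② is easy to reproduce on a single tensor. In this minimal sketch (a toy parameter and loss, not the network from these notes), the second backward() call adds to the existing gradient instead of replacing it:

```python
import torch

w = torch.tensor([3.0], requires_grad=True)

(w ** 2).sum().backward()
first = w.grad.clone()        # tensor([6.])

(w ** 2).sum().backward()     # no zeroing in between
accumulated = w.grad.clone()  # tensor([12.]): 6 + 6, not 6

w.grad.zero_()                # reset by hand; optim.zero_grad() clears the
                              # gradients of every parameter it manages
(w ** 2).sum().backward()
after_zero = w.grad.clone()   # tensor([6.])

print(first, accumulated, after_zero)
```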

Optimizing for One Epoch

```python
import torch
import torchvision
from torch import nn
from torch.nn import Conv2d, MaxPool2d, Flatten, Linear, Sequential
from torch.utils.data import DataLoader

dataset = torchvision.datasets.CIFAR10(
    "./dataset", train=False,
    transform=torchvision.transforms.ToTensor(), download=True)
dataloader = DataLoader(dataset, batch_size=64, drop_last=True)


class Tudui(nn.Module):
    def __init__(self):
        super(Tudui, self).__init__()
        self.model1 = Sequential(
            Conv2d(3, 32, 5, padding=2),
            MaxPool2d(2),
            Conv2d(32, 32, 5, padding=2),
            MaxPool2d(2),
            Conv2d(32, 64, 5, padding=2),
            MaxPool2d(2),
            Flatten(),
            Linear(1024, 64),
            Linear(64, 10),
        )

    def forward(self, x):
        x = self.model1(x)
        return x


loss = nn.CrossEntropyLoss()  # cross-entropy
tudui = Tudui()
optim = torch.optim.SGD(tudui.parameters(), lr=0.01)  # stochastic gradient descent
for data in dataloader:
    imgs, targets = data
    outputs = tudui(imgs)
    result_loss = loss(outputs, targets)  # gap between actual output and target
    optim.zero_grad()       # zero the gradients
    result_loss.backward()  # backpropagate to compute the gradients of the loss
    optim.step()            # update the network's parameters from the gradients
    # The data is seen only once (a single epoch), so the loss drops only slightly.
    print(result_loss)
```

Optimizing for Multiple Epochs

```python
import torch
import torchvision
from torch import nn
from torch.nn import Conv2d, MaxPool2d, Flatten, Linear, Sequential
from torch.utils.data import DataLoader

dataset = torchvision.datasets.CIFAR10(
    "./dataset", train=False,
    transform=torchvision.transforms.ToTensor(), download=True)
dataloader = DataLoader(dataset, batch_size=64, drop_last=True)


class Tudui(nn.Module):
    def __init__(self):
        super(Tudui, self).__init__()
        self.model1 = Sequential(
            Conv2d(3, 32, 5, padding=2),
            MaxPool2d(2),
            Conv2d(32, 32, 5, padding=2),
            MaxPool2d(2),
            Conv2d(32, 64, 5, padding=2),
            MaxPool2d(2),
            Flatten(),
            Linear(1024, 64),
            Linear(64, 10),
        )

    def forward(self, x):
        x = self.model1(x)
        return x


loss = nn.CrossEntropyLoss()  # cross-entropy
tudui = Tudui()
optim = torch.optim.SGD(tudui.parameters(), lr=0.01)  # stochastic gradient descent
for epoch in range(20):
    running_loss = 0.0
    for data in dataloader:
        imgs, targets = data
        outputs = tudui(imgs)
        result_loss = loss(outputs, targets)  # gap between actual output and target
        optim.zero_grad()       # zero the gradients
        result_loss.backward()  # backpropagate to compute the gradients of the loss
        optim.step()            # update the network's parameters from the gradients
        # Adding the tensor itself keeps its grad_fn (hence AddBackward0 in the
        # output); result_loss.item() would accumulate a plain float instead.
        running_loss = running_loss + result_loss
    print(running_loss)  # total loss over all batches in this epoch
```

Output:

```text
Files already downloaded and verified
tensor(358.3089, grad_fn=<AddBackward0>)
tensor(354.2934, grad_fn=<AddBackward0>)
tensor(340.0827, grad_fn=<AddBackward0>)
tensor(317.9955, grad_fn=<AddBackward0>)
tensor(309.5746, grad_fn=<AddBackward0>)
tensor(301.8364, grad_fn=<AddBackward0>)
tensor(292.2207, grad_fn=<AddBackward0>)
tensor(283.9526, grad_fn=<AddBackward0>)
tensor(277.2521, grad_fn=<AddBackward0>)
tensor(271.0922, grad_fn=<AddBackward0>)
tensor(265.5347, grad_fn=<AddBackward0>)
```
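These totals are sums over the 156 batches of an epoch (10000 test images, batch size 64, last partial batch dropped), so 358.3089 corresponds to roughly 2.30 per batch, in line with the per-batch values printed earlier. A common variation is to accumulate result_loss.item() and report the mean per batch. The sketch below shows that pattern on synthetic data with a single nn.Linear layer; both are assumptions made to keep the example self-contained and quick to run, not the CIFAR10 setup above.

```python
import torch
from torch import nn

torch.manual_seed(0)
# Synthetic stand-in data: 8 batches of 16 samples, 4 features, 3 classes.
batches = [(torch.randn(16, 4), torch.randint(0, 3, (16,))) for _ in range(8)]

model = nn.Linear(4, 3)
loss_fn = nn.CrossEntropyLoss()
optim = torch.optim.SGD(model.parameters(), lr=0.01)

epoch_means = []
for epoch in range(3):
    running_loss = 0.0
    for inputs, targets in batches:
        optim.zero_grad()
        batch_loss = loss_fn(model(inputs), targets)
        batch_loss.backward()
        optim.step()
        running_loss += batch_loss.item()  # plain Python float, no graph kept
    epoch_means.append(running_loss / len(batches))
    print(f"epoch {epoch}: mean loss {epoch_means[-1]:.4f}")
```

Reporting the mean makes the number comparable across different batch sizes and dataset lengths.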